AI Agents 101: Understanding the Basics and Their Role in Modern Tech


In 2023, 42% of enterprises adopted AI agents for automation. An AI agent is an autonomous software entity that perceives its environment, decides on actions toward a goal, and learns from the outcomes.


At its core, an AI agent is a software loop that observes its surroundings, decides on an action, and learns from the result. Think of it like a robot vacuum: it senses obstacles, chooses a path, and refines its route each time it cleans. In software, the environment is often a codebase or API, the goal is a task like “build a CI pipeline,” and learning comes from feedback such as build success or failure.
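A minimal sketch of the observe-decide-learn loop, assuming a toy environment in which three hypothetical build strategies succeed with made-up probabilities. The agent uses an epsilon-greedy rule (a basic reinforcement-learning technique, not something the article prescribes) to learn from build success or failure which strategy works best. All names here are illustrative.

```python
import random
from dataclasses import dataclass, field

# Hypothetical environment: success rates of three build strategies (made-up numbers)
SUCCESS_RATE = {"cache_deps": 0.9, "clean_build": 0.6, "skip_tests": 0.2}


def run_build(action: str, rng: random.Random) -> float:
    """Observe: the environment reports 1.0 for a green build, 0.0 for a red one."""
    return 1.0 if rng.random() < SUCCESS_RATE[action] else 0.0


@dataclass
class ToyAgent:
    actions: list[str]
    epsilon: float = 0.1                                     # exploration rate
    values: dict[str, float] = field(default_factory=dict)  # estimated success rate
    counts: dict[str, int] = field(default_factory=dict)    # times each action was tried

    def decide(self, rng: random.Random) -> str:
        # Explore occasionally; otherwise pick the action with the best estimate
        if not self.values or rng.random() < self.epsilon:
            return rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.values.get(a, 0.0))

    def learn(self, action: str, reward: float) -> None:
        # Incremental mean: nudge the estimate toward the observed reward
        n = self.counts.get(action, 0) + 1
        self.counts[action] = n
        old = self.values.get(action, 0.0)
        self.values[action] = old + (reward - old) / n


def run_agent(steps: int = 2000, seed: int = 0) -> ToyAgent:
    rng = random.Random(seed)
    agent = ToyAgent(actions=list(SUCCESS_RATE))
    for _ in range(steps):
        action = agent.decide(rng)          # decide
        reward = run_build(action, rng)     # act, then observe the outcome
        agent.learn(action, reward)         # learn
    return agent
```

After a couple of thousand simulated builds, the agent spends most of its time on the strategy with the highest success rate while still occasionally re-checking the others.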

Typical use cases in development include:

- Automated code generation - agents read specifications and produce boilerplate, saving hours.

- Continuous integration testing - agents run tests, analyze failures, and suggest fixes.

- Documentation assistance - agents parse code comments and generate user guides.

Ethical considerations are crucial for beginners. First, transparency - developers should be able to inspect the agent’s decision path. Second, bias mitigation - training data must be diverse to avoid reinforcing stereotypes in code suggestions. Finally, accountability frameworks - assign clear ownership so that if an agent makes a harmful decision, there’s a human in the loop to intervene.
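The transparency and accountability points can be sketched as a small wrapper that records each decision with its rationale and requires human approval for actions flagged as risky. The class name, the approver callback, and the risky-action set are hypothetical, not part of any particular agent framework.

```python
import json
from datetime import datetime, timezone


class AuditedAgent:
    """Hypothetical wrapper: records every decision and gates risky ones behind a human."""

    def __init__(self, approver, risky_actions):
        self.approver = approver                # callable(action) -> bool, e.g. a review prompt
        self.risky_actions = set(risky_actions)
        self.log = []                           # the inspectable decision path

    def act(self, action: str, rationale: str) -> bool:
        # Human in the loop: risky actions proceed only if a person approves them
        approved = self.approver(action) if action in self.risky_actions else True
        self.log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "rationale": rationale,             # why the agent chose this action
            "approved": approved,
        })
        return approved

    def export_log(self) -> str:
        # JSON keeps the decision path easy to review, diff, or archive
        return json.dumps(self.log, indent=2)
```

Because every entry names an action, a reason, and whether a human signed off, the log doubles as the ownership record the accountability point calls for.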

Key Takeaways

- AI agents loop: observe, decide, learn.

- Common use: code generation, CI, docs.

- Transparency, bias, accountability matter.

LLMs as Conversational Co-Workers: How Language Models Drive Smart IDEs

Integrating a large language model (LLM) into an IDE can turn the editor into a smart partner. There are three main pathways:

- API calls - send prompts to a hosted service such as OpenAI or Azure OpenAI and receive suggestions.

- Embedded models - run a distilled LLM locally inside an extension.

- Conversational prompts - keep a running dialogue history and resend it with each request, so the model can refer back to earlier code snippets.
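The conversational pathway can be sketched as a small history manager: the model itself is stateless, so "memory" means resending prior turns and trimming the oldest ones when the context gets too long. The complete hook, the class name, and the character-based budget (real limits are counted in tokens) are assumptions for illustration.

```python
from typing import Callable

Message = dict[str, str]


class Conversation:
    """Keeps chat history so every request carries the earlier code snippets."""

    def __init__(self, complete: Callable[[list[Message]], str],
                 system: str = "You are a helpful coding assistant.",
                 max_chars: int = 8000):
        self.complete = complete      # hypothetical hook: sends messages, returns reply text
        self.max_chars = max_chars    # crude context budget in characters, not tokens
        self.messages: list[Message] = [{"role": "system", "content": system}]

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        self._trim()
        reply = self.complete(self.messages)   # the whole history is resent each turn
        self.messages.append({"role": "assistant", "content": reply})
        return reply

    def _trim(self) -> None:
        # Drop the oldest turns (never the system prompt) until the history fits
        while (sum(len(m["content"]) for m in self.messages) > self.max_chars
               and len(self.messages) > 2):
            del self.messages[1]
```

With the official openai package (v1 or later), complete could be `lambda msgs: client.chat.completions.create(model="gpt-4o-mini", messages=msgs).choices[0].message.content` with `client = OpenAI()`; the model name is only an example.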

LLMs process context through tokenization (splitting text into units) and embeddings (numeric vectors capturing meaning), while prompt engineering (crafting the right question) determines what context they receive in the first place. Accurate code completion hinges on providing enough context: if the prompt omits an import, the model may guess the wrong library and suggest calls that don't exist.
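A heuristic sketch of how an IDE extension might assemble context for a completion request: include the file's top-level imports so the model knows which libraries are in scope, plus the code just above the cursor. The function name and window size are illustrative assumptions; production tools may gather richer context, such as other open files.

```python
def build_completion_prompt(source: str, cursor_line: int, window: int = 20) -> str:
    """Assemble completion context: every top-level import plus up to `window`
    lines ending at the cursor (cursor_line is 1-based)."""
    lines = source.splitlines()
    # Top-level imports tell the model which libraries (and APIs) are actually in scope
    imports = [ln for ln in lines if ln.startswith(("import ", "from "))]
    start = max(0, cursor_line - window)
    # Nearby code gives local context; skip imports already included above
    nearby = [ln for ln in lines[start:cursor_line] if ln not in imports]
    return "\n".join(imports + [""] + nearby)
```

Even with a one-line window, the prompt for a cursor after `y = np.` still starts with `import numpy as np`, so the model knows `np` means NumPy.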

Fine-tuning a smaller model locally is practical for teams with limited GPU budgets. Steps include:

- Collect a dataset of code-comment pairs relevant to your domain.

- Use transfer learning to adapt a base model like GPT-Neo.

- Apply gradient checkpointing to reduce memory usage.
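The three steps above can be sketched with the Hugging Face transformers and datasets libraries (an assumption; the article doesn't name a toolkit). The output directory and hyperparameters are placeholders, and the heavy imports sit inside fine_tune so the data-formatting helper works without them installed.

```python
def format_pair(code: str, comment: str) -> str:
    """Turn one code-comment pair into a training example: comment first, then code."""
    return f"# {comment.strip()}\n{code.strip()}\n"


def fine_tune(pairs, base_model: str = "EleutherAI/gpt-neo-125M",
              out_dir: str = "gpt-neo-finetuned") -> None:
    """Adapt a small pretrained model to (code, comment) pairs from your domain."""
    # Heavy dependencies are imported lazily: pip install transformers datasets torch
    from datasets import Dataset
    from transformers import (AutoModelForCausalLM, AutoTokenizer,
                              DataCollatorForLanguageModeling, Trainer,
                              TrainingArguments)

    tokenizer = AutoTokenizer.from_pretrained(base_model)
    tokenizer.pad_token = tokenizer.eos_token      # GPT-Neo ships without a pad token
    model = AutoModelForCausalLM.from_pretrained(base_model)  # transfer learning starts here

    texts = [format_pair(code, comment) for code, comment in pairs]
    dataset = Dataset.from_dict({"text": texts}).map(
        lambda batch: tokenizer(batch["text"], truncation=True, max_length=512),
        batched=True, remove_columns=["text"])

    args = TrainingArguments(
        output_dir=out_dir,
        per_device_train_batch_size=2,
        gradient_accumulation_steps=8,   # behaves like a batch of 16 on a small GPU
        gradient_checkpointing=True,     # recompute activations to save memory
        num_train_epochs=3,
        learning_rate=5e-5,
    )
    trainer = Trainer(model=model, args=args, train_dataset=dataset,
                      data_collator=DataCollatorForLanguageModeling(tokenizer, mlm=False))
    trainer.train()
    trainer.save_model(out_dir)
```

Calling `fine_tune([(code, comment), ...])` downloads the 125M-parameter GPT-Neo checkpoint, the smallest in that family, which suits a modest GPU.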

Remember that smaller models trade accuracy for speed: they run faster and more cheaply but are usually less accurate than larger ones. Pro tip: keep a sandbox environment to test the fine-tuned model before deploying it to production.

Coding Agents for Beginners: Automating Routine Tasks in Your Development Workflow

Let’s walk through building a simple code-generation chain with LangChain, the basic building block of a coding agent. First, set up a Python environment:

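```bash
python -m venv venv
source venv/bin/activate
pip install langchain langchain-openai
export OPENAI_API_KEY="your-key-here"
```

Next, create a basic script. Current LangChain releases ship the OpenAI integration in the separate langchain-openai package and favor the pipe (|) syntax over the deprecated LLMChain class:

```python
from langchain_core.prompts import PromptTemplate
from langchain_openai import OpenAI

# One placeholder, {task}, is filled in at run time
prompt = PromptTemplate(
    input_variables=["task"],
    template="Write a Python function that {task}",
)
llm = OpenAI(temperature=0.2)  # low temperature = less random output; reads OPENAI_API_KEY

chain = prompt | llm  # pipe syntax replaces the deprecated LLMChain
print(chain.invoke({"task": "calculates the factorial of a number"}))
```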

Task decomposition is key: break a feature into sub-tasks, prioritize them, and let the agent execute each step. For example, building a user profile page might split into (1) database schema, (2) API endpoints, (3) UI components. The agent can queue these and track completion status.
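Using the profile-page example, here is a sketch of how an agent might queue sub-tasks in dependency order and track their status, built on Python's standard-library graphlib (3.9+). The execute callback is hypothetical; in a real agent it might make one LLM call per sub-task.

```python
from graphlib import TopologicalSorter

# Sub-tasks for the profile-page example; each maps to the tasks it depends on
SUBTASKS = {
    "database schema": set(),
    "API endpoints": {"database schema"},
    "UI components": {"API endpoints"},
}


def run_plan(subtasks, execute):
    """Run sub-tasks in dependency order and record each one's status.

    `execute` is a hypothetical callable that returns True on success.
    """
    status = {task: "pending" for task in subtasks}
    sorter = TopologicalSorter(subtasks)
    sorter.prepare()
    while sorter.is_active():
        for task in sorter.get_ready():       # tasks whose dependencies are done
            status[task] = "done" if execute(task) else "failed"
            if status[task] == "failed":
                return status                 # stop so a human can inspect the failure
            sorter.done(task)
    return status
```

Stopping at the first failure keeps dependent sub-tasks, such as UI components that need working endpoints, from being built on a broken foundation.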

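Debug the agent through logs and alerts. Start with a simple logger:

```python
import logging

# Timestamped INFO-level logs make each agent step traceable
logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
```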

Set up an alert system (e.g., a Slack webhook) to notify you if the agent stalls. For rollback, store each code change on a temporary branch: merge it into main only after your checks pass, and if a failure occurs, delete the branch so main is never touched. Last year I helped a startup in Austin build a code generation agent that reduced feature turnaround from 3 days to 12 hours.
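A minimal sketch of stall alerts and branch-based rollback, assuming a Slack incoming webhook (which accepts a JSON body with a "text" field), a repository whose main branch is named main, and Git 2.23 or later for git switch. StallWatchdog, try_on_temp_branch, and the apply_change and checks_pass callbacks are hypothetical names. In this rollback pattern the temporary branch is merged only when checks pass and is deleted either way.

```python
import json
import subprocess
import time
import urllib.request


def send_slack_alert(webhook_url: str, text: str) -> None:
    """Post a message to a Slack incoming webhook (URL supplied by you)."""
    req = urllib.request.Request(
        webhook_url,
        data=json.dumps({"text": text}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        resp.read()


class StallWatchdog:
    """Fires one alert when no heartbeat arrives within `timeout` seconds."""

    def __init__(self, timeout: float, alert, clock=time.monotonic):
        self.timeout = timeout
        self.alert = alert                # e.g. lambda msg: send_slack_alert(URL, msg)
        self.clock = clock
        self.last_beat = clock()
        self.alerted = False

    def heartbeat(self) -> None:
        # Call after each completed agent step
        self.last_beat = self.clock()
        self.alerted = False

    def check(self) -> bool:
        # Call periodically, e.g. from a scheduler or timer thread
        stalled = self.clock() - self.last_beat > self.timeout
        if stalled and not self.alerted:
            self.alert(f"Coding agent stalled: no progress for over {self.timeout}s")
            self.alerted = True
        return stalled


def try_on_temp_branch(branch: str, apply_change, checks_pass, repo: str = ".") -> bool:
    """Apply an agent change on a throwaway branch; merge into main only if checks pass."""
    def git(*args: str) -> None:
        subprocess.run(["git", "-C", repo, *args], check=True)

    git("switch", "-c", branch)
    apply_change()                        # hypothetical: agent edits and commits files
    passed = checks_pass()                # hypothetical: e.g. run the test suite
    git("switch", "main")
    if passed:
        git("merge", "--ff-only", branch)
    git("branch", "-D", branch)           # a failed change never reaches main
    return passed
```

The watchdog alerts once per stall rather than on every check, which keeps a stuck agent from flooding the channel.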

SLMS in the Cloud: A Simple Guide to Deploying Scalable Language Models

Scalable Language Model Services (SLMS) provide on-demand inference, allowing developers to run LLMs without owning hardware. Think of SLMS as renting a GPU farm whenever you need it.

Cloud options vary:

| Provider | Latency | Cost | Scalability |
|---|---|---|---|
| Amazon Bedrock | 15-30 ms | $0.02/1k tokens | Auto-scales to millions of requests |
| Azure OpenAI | 20-35 ms | $0.025/1k tokens | Elastic scaling with Azure Functions |
| NVIDIA Triton (self-hosted) | 10-20 ms | CapEx + OpEx | Scales horizontally by adding nodes |

For small teams self-hosting models, spot instances on AWS or Azure can cut costs by up to 40% (AWS, 2024). For example, an autoscaling policy might add capacity when queue depth exceeds 50 pending requests. Pay-as-you-go pricing works best for bursty workloads, while reserved instances are cheaper for steady traffic.
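A sketch of the scaling and pricing rules in this paragraph. desired_replicas applies a target-tracking rule, treating the threshold of 50 as queued requests per replica; cheaper_option compares a month of pay-as-you-go usage with a flat reserved price. Both functions and every price below are hypothetical.

```python
import math


def desired_replicas(queue_depth: int, target_per_replica: int = 50,
                     min_replicas: int = 1, max_replicas: int = 20) -> int:
    """Target tracking: run enough replicas that each has at most
    `target_per_replica` queued requests, clamped to [min, max]."""
    needed = math.ceil(queue_depth / target_per_replica)
    return max(min_replicas, min(max_replicas, needed))


def cheaper_option(hours_per_month: float, on_demand_hourly: float,
                   reserved_monthly: float) -> str:
    """Compare pay-as-you-go usage with a flat reserved price for one month."""
    pay_as_you_go = hours_per_month * on_demand_hourly
    return "pay-as-you-go" if pay_as_you_go < reserved_monthly else "reserved"
```

With made-up prices of $2/hour on demand and $1,000/month reserved, a bursty service used 100 hours a month costs $200 pay-as-you-go, while one running around the clock (about 730 hours) would cost roughly $1,460 and is cheaper reserved.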

When evaluating SLMS, consider latency for real-time IDE suggestions, token limits for long documents, and data residency for compliance.
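To check whether a service is fast enough for real-time IDE suggestions, it helps to measure tail latency rather than just the average. This sketch times any callable (for example, a hypothetical wrapper around your provider's SDK) and reports p50 and p95 in milliseconds using the standard-library statistics module.

```python
import statistics
import time


def latency_profile(call, prompts, clock=time.perf_counter):
    """Time call(prompt) for each prompt; return p50/p95 in ms (needs >= 2 prompts)."""
    samples = []
    for prompt in prompts:
        start = clock()
        call(prompt)
        samples.append((clock() - start) * 1000)   # seconds -> milliseconds
    cuts = statistics.quantiles(samples, n=20, method="inclusive")  # 5% steps
    return {"p50_ms": statistics.median(samples), "p95_ms": cuts[18]}
```

A p95 well above the median usually means some suggestions will feel sluggish even when the average looks fine.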

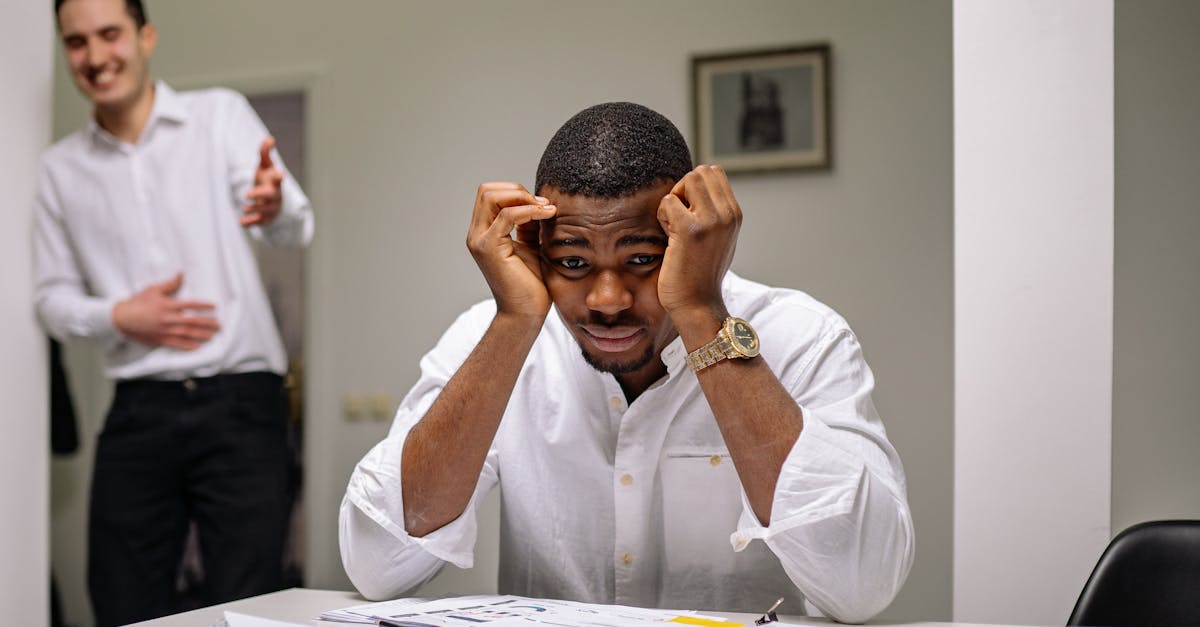

Organizational Clashes: Navigating the Human vs. AI Agent Debate in Teams

Deploying AI agents often sparks friction. Common points of contention include:

- Workflow integration - agents may duplicate existing manual steps.

- Data ownership - who owns the code the agent generates?

- Resistance to change - engineers fear job displacement.

To align agent goals with business objectives, start with clear success metrics: speed of delivery, defect reduction, and developer satisfaction. Run stakeholder workshops to map out the agent’s value proposition. Then roll out incrementally: begin with a single feature, measure impact, and scale.

Use dashboards that compare the number of tickets closed by the agent with those closed by humans. If the agent reduces cycle time by 30%, present the data to skeptics. Pro tip: involve developers early in the agent design to gain buy-in.
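A sketch of the metric behind such a dashboard: compare median cycle time for tickets closed by the agent with those closed by humans. The (closed_by, hours) ticket format is an assumption; in practice the data would come from your issue tracker's export.

```python
from statistics import median


def cycle_time_report(tickets):
    """tickets: iterable of (closed_by, cycle_time_hours), closed_by in {"agent", "human"}."""
    hours = {"agent": [], "human": []}
    for closed_by, cycle_hours in tickets:
        hours[closed_by].append(cycle_hours)
    agent_median = median(hours["agent"])
    human_median = median(hours["human"])
    return {
        "agent_tickets": len(hours["agent"]),
        "human_tickets": len(hours["human"]),
        "median_hours_agent": agent_median,
        "median_hours_human": human_median,
        # Positive means agent-handled tickets close faster
        "cycle_time_reduction_pct": round(100 * (human_median - agent_median) / human_median, 1),
    }
```

The median is used instead of the mean so that one stuck ticket doesn't dominate the comparison.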

Technology Trends: Why Learning AI Agents Makes You Future-Proof

Upskilling from